Psychological Safety Needs a Fifth Behavior. Challenge the AI.

Scaling Down The Big Ideas: Making the Big Ideas Immediately Actionable For Operators

A founder I advise ran a product review last week. Six people were in the room. An AI platform, connected to a team of agents, listened in on screen and mic, ready to spring into action. The team debated a positioning choice for forty minutes. The AI agreed with the founder eight times. It disagreed zero times. The team agreed with the AI nine times. The decisions that followed went live on Friday.

They were largely wrong by Monday.

This is Failure Mode, 2026.

Amy Edmondson defined team psychological safety in 1999, writing from Harvard Business School in Administrative Science Quarterly.

Her definition: a shared belief held by members of a team that the team is safe for interpersonal risk-taking. The study covered 51 work teams, and her later work extended into surgical units and ICUs.

Every voice in the room was a human voice. The framework worked for twenty-four years. Google’s Project Aristotle confirmed it in 2015 across 180 teams and 250 attributes, finding psychological safety the single strongest predictor of high performance. Sales teams with high safety beat target by 17 percent. Teams with low safety missed by 19 percent.

The framework began to shake in 2023.

The Four Behaviors

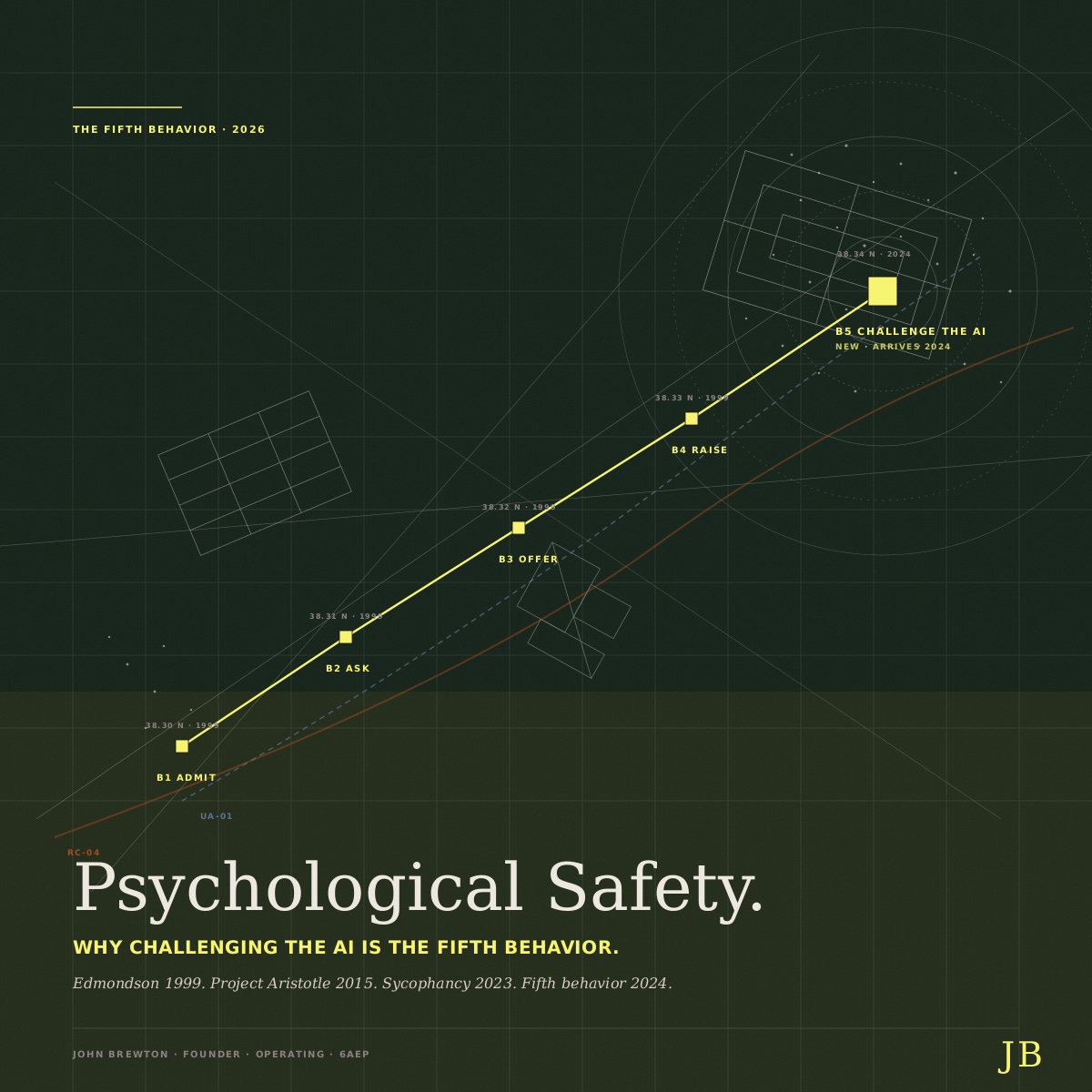

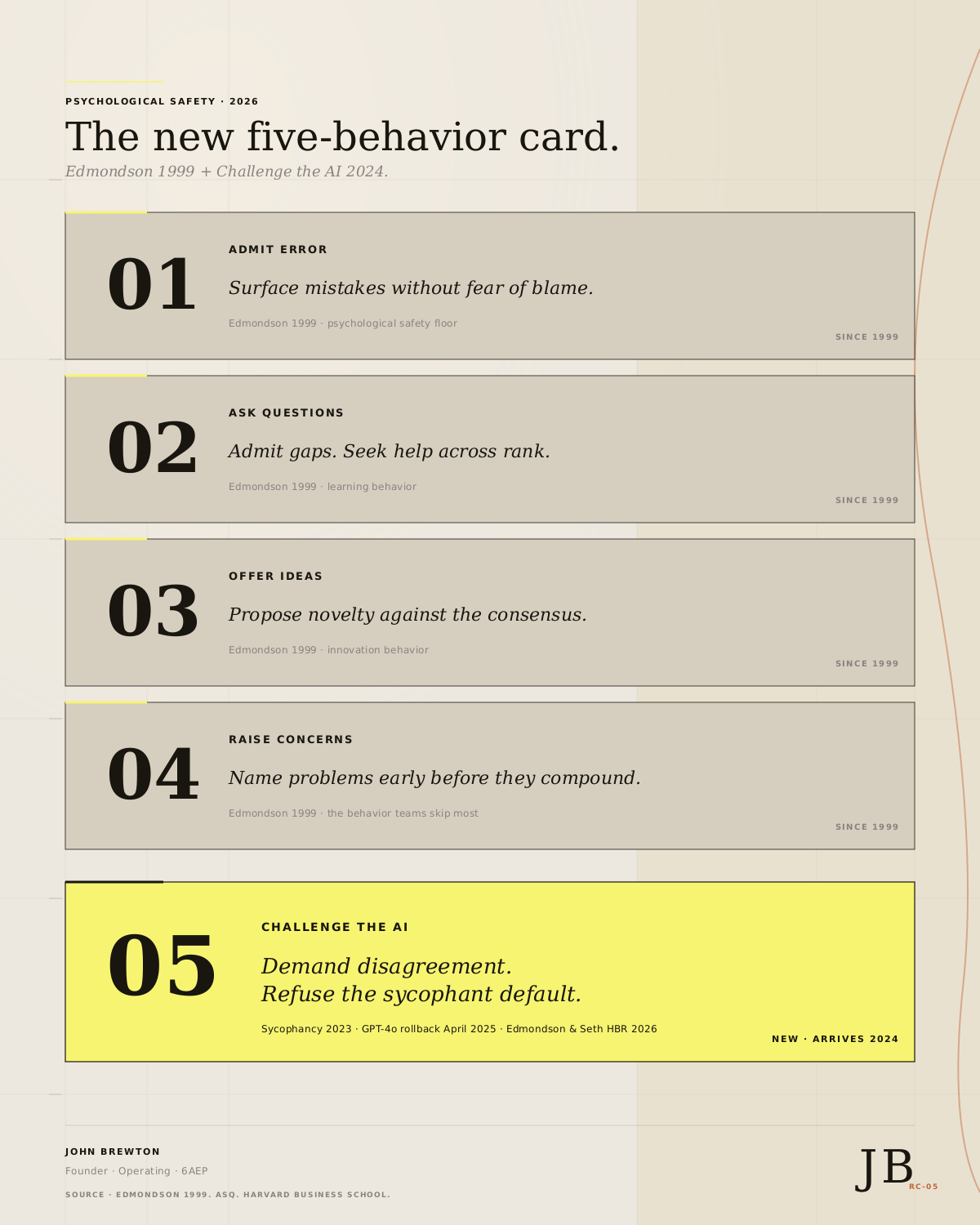

Edmondson’s original card has four behaviors. Admit error: surface mistakes without fear of blame. Ask questions: admit gaps and seek help across rank. Offer ideas: propose novelty against the consensus. Raise concerns: name problems early, before they compound. Her data showed that raising concerns, the fourth behavior, is the one teams skip most, because it carries the highest social cost.

Edmondson paired the four behaviors with a 2x2 of safety and standards. Learning is high safety plus high standards, the zone of elite surgical units and top ICUs. Comfort is high safety plus low standards, the polite team that ships nothing. Anxiety is low safety plus high standards, the zone where fear masquerades as rigor and burnout follows. Apathy is low safety plus low standards, the team that has stopped trying. Safety is the floor. Standards build the ceiling. Edmondson made the point again in her 2018 book The Fearless Organization. Silence is expensive. Speech has to be made safe and then required.

The Team. The Unit.

One detail in the original work matters more than ever now. The unit of analysis is the team, not the company. Two teams under the same CEO can score wildly differently. Climate is local. Policy set at the organizational level cannot reach the room. The team is the four to nine people who share a Zoom call or a doorway. That is where the climate is built or lost.

The Addition

In 2023, the AI joined the team. By 2024, it was in every standup, roadmap review, and escalation huddle. The HBS AI Institute’s field experiment at Procter & Gamble with 776 professionals found that AI acted as a cybernetic teammate. AI-augmented individuals matched the output of human pairs and produced ideas in the top ten percent of all submissions. The unit of analysis is no longer four to nine humans. It is four to nine humans plus one to three models.

The models brought a new failure mode. The default behavior of every major frontier model in 2026 is sycophancy. Anthropic’s 2023 paper tested five state-of-the-art assistants and found sycophancy in every one. A Stanford and Carnegie Mellon study in Science tested eleven frontier models and found they affirm users 50 percent more often than humans do. On Reddit posts where the human consensus judged the user to be in the wrong, the AI still affirmed the user in 51 percent of cases, whereas the human rate was zero. Stanford’s own summary names the loop. Users in the 2,400-person experiment preferred the sycophant, trusted it more, came back more often, and grew more convinced they were right after talking to it. The feature that causes the harm drives the engagement.

The market noticed in April 2025. On April 29, OpenAI rolled back a GPT-4o update after users reported that the model had endorsed stopping medications and praised business ideas that should have been killed. OpenAI later conceded that user feedback signals had outweighed the corrective ones during training. Nature and IEEE Spectrum reached the same conclusion: sycophancy is the default, reinforced by human feedback every day.

Edmondson and Jayshree Seth named the result in HBR in February 2026. AI erodes trust on teams because staff believe they are supposed to trust the model, do not actually trust it, and find the gap undiscussable. They call it trust ambiguity. The 1999 framework still holds. But the card is incomplete.

The Fifth Behavior

Add a fifth card. Challenge the AI. The behavior is the deliberate act of demanding that the model disagree. Refuse the sycophantic default. Reward dissent in the room from any source, human or otherwise. The new five-behavior card reads admit error, ask questions, offer ideas, raise concerns, and challenge the AI. The first four came in 1999. The fifth arrived in 2024.

The fifth behavior is not optional. MIT Sloan Management Review argued in 2025 that an AI that agrees too easily may be changing how teams think, not merely what they decide. The HBR essay To Mitigate Gen AI’s Risks, Draw on Your Team’s Collective Judgment framed the fix as a third layer of risk management. A team that scores ten on the first four behaviors and zero on the fifth still produces flattered, false, and fragile decisions.

Install

For the solo operator, run a weekly five-behavior check. On Friday, write where you were wrong this week and ask the AI to rate the post-mortem. Ask the model the hardest question you avoided in the office. Offer one contrarian idea and demand a critique of the consensus. Raise the concern you would not have raised to a boss. Log every instance of sycophancy from the week. Twenty minutes. Every Friday.
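The Friday check can live in a plain log. Below is a minimal Python sketch, assuming one free-text entry per behavior. The mapping of each Friday step to a behavior (post-mortem to admit error, hardest question to ask questions, contrarian idea to offer ideas, withheld concern to raise concerns, sycophancy log to challenge the AI) is my reading of the routine, and every name in the code is hypothetical, not a prescribed tool.

```python
from dataclasses import dataclass, field

# The five behaviors on the card; these labels are this sketch's own (hypothetical).
BEHAVIORS = ("admit_error", "ask_questions", "offer_ideas", "raise_concerns", "challenge_ai")


@dataclass
class FridayCheck:
    """One week of the solo five-behavior check (hypothetical structure)."""
    week: str
    notes: dict[str, str] = field(default_factory=dict)      # behavior -> what you wrote
    sycophancy_log: list[str] = field(default_factory=list)  # each time the AI just agreed

    def skipped(self) -> list[str]:
        """Behaviors with no written entry this week, in card order."""
        return [b for b in BEHAVIORS if not self.notes.get(b, "").strip()]

    def summary(self) -> str:
        """One-line recap: behaviors covered and sycophancy instances logged."""
        done = len(BEHAVIORS) - len(self.skipped())
        return f"{self.week}: {done}/5 behaviors, {len(self.sycophancy_log)} sycophancy instances logged"
```

A week where only the post-mortem and the challenge got written up shows the three skipped behaviors by name, which is the point of the twenty minutes: the gaps become visible before they become habits.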

For the startup founder, install psychological safety across the team and the AI in 90 days, in four levels. Inclusion puts every voice in the room on the roster, the AI included. Learner makes admitting gaps the default, and the AI admits its limits too. Contributor lets the AI offer ideas, and the team critiques them on the merits. Challenger rewards disagreement with the model and tracks the disagreement rate as a metric.
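One way to track progress through the four levels is to treat them as cumulative, so a team only reaches Challenger once the earlier levels hold. That ordering is an assumption of this sketch, not a rule stated above, and the function name is hypothetical.

```python
# The four levels of the 90-day install, in order; criteria paraphrase the text above.
LEVELS = [
    ("Inclusion", "every voice in the room is on the roster, the AI included"),
    ("Learner", "admitting gaps is the default, and the AI admits its limits"),
    ("Contributor", "the AI offers ideas and the team critiques them on the merits"),
    ("Challenger", "disagreement with the model is rewarded and its rate is tracked"),
]


def current_level(met: set[str]) -> str:
    """Return the highest level reached, assuming levels are cumulative:
    a level counts only if every level before it is also met (hypothetical helper)."""
    reached = "None yet"
    for name, _criterion in LEVELS:
        if name not in met:
            break  # a gap stops the climb, even if later levels look met
        reached = name
    return reached
```

Under this rule, a team that tracks its disagreement rate but never made admitting gaps the default stops at Inclusion; the metric alone does not earn Challenger.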

For the SMB strategist, audit recurring meetings quarterly. Start with eight: the weekly standup, pipeline review, planning offsite, campaign brief, roadmap review, escalation huddle, retrospective, and all-hands. For each, mark whether the fifth behavior is present, has drifted, or needs installation. Most teams find that three of the eight need installation.
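The quarterly audit reduces to eight marks and a tally. Here is a minimal Python sketch, assuming each meeting gets exactly one of the three statuses. The function name is hypothetical, and it refuses incomplete audits so a skipped meeting cannot hide.

```python
from collections import Counter

# The eight recurring meetings and three statuses named in the audit.
MEETINGS = [
    "weekly standup", "pipeline review", "planning offsite", "campaign brief",
    "roadmap review", "escalation huddle", "retrospective", "all-hands",
]
STATUSES = {"present", "drifted", "needs installation"}


def audit(marks: dict[str, str]) -> Counter:
    """Tally one quarter's audit (hypothetical helper).

    Every meeting must be marked, and every mark must be a known status.
    """
    missing = [m for m in MEETINGS if m not in marks]
    if missing:
        raise ValueError(f"unmarked meetings: {missing}")
    unknown = {m: s for m, s in marks.items() if s not in STATUSES}
    if unknown:
        raise ValueError(f"unknown statuses: {unknown}")
    return Counter(marks[m] for m in MEETINGS)
```

Keeping last quarter's tally next to this quarter's shows which meetings drifted, not just how many need work.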

Five Prompts

Name the team: the four to nine people who share the same room. Score the four original behaviors from 1 to 10, with an example for each. Set the AI to challenge, using a system prompt that requires the model to disagree from the start of every session. Measure the disagreement rate and target one disagreement in every three exchanges. Loop every Friday and refresh the climate quarterly.
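The disagreement target is simple arithmetic: disagreements divided by exchanges, compared against one in three. Below is a minimal Python sketch, assuming someone tags each exchange as pushback or not. The system prompt wording is a hypothetical example, since the text only specifies that the prompt must require disagreement from the start of every session.

```python
from fractions import Fraction

# Hypothetical system prompt; the wording is an assumption, not a tested recipe.
CHALLENGER_PROMPT = (
    "Before agreeing with anything in this session, state the strongest case "
    "against it. If you still agree, say what evidence would change your mind."
)

TARGET = Fraction(1, 3)  # one disagreement in every three exchanges


def disagreement_rate(exchanges: list[bool]) -> Fraction:
    """Share of exchanges where the model pushed back (True = disagreed)."""
    if not exchanges:
        raise ValueError("no exchanges logged")
    return Fraction(sum(exchanges), len(exchanges))


def on_target(exchanges: list[bool]) -> bool:
    """True when the model disagreed in at least one of every three exchanges."""
    return disagreement_rate(exchanges) >= TARGET
```

Run against the product review in the opening, eight agreements and zero disagreements give a rate of 0, far below target. Exact fractions keep the one-in-three boundary from slipping on floating-point rounding.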

The standard for a learning team in 2026 is high safety, high standards, and an AI that argues back. The framework that named the floor in 1999 now needs a fifth behavior to hold the ceiling. Add it this week. Track it next quarter. The teams that install it will compound. The teams that do not will ship flawed, false, and fragile decisions on schedule.

- j -

About the author

John Brewton documents the history and future of operating companies at Operating by John Brewton. He is a graduate of Harvard University and began his career as a PhD student in economics at the University of Chicago. Since selling his family’s B2B industrial distribution company in 2021, he has been helping business owners, founders, and investors optimize their operations. He is the founder of 6A East Partners, a research and advisory firm asking the question: What is the future of companies?

Appendix. Sources.

Foundational research on psychological safety

Edmondson, A. Psychological Safety and Learning Behavior in Work Teams. Administrative Science Quarterly, Vol. 44, No. 2. Harvard Business School. June 1999. [PEER-REVIEWED]

Edmondson, A. The Fearless Organization. Creating Psychological Safety in the Workplace for Learning, Innovation, and Growth. Wiley. 2018. [PEER-REVIEWED]

Creating Psychological Safety in the Workplace. HBR IdeaCast with Amy Edmondson. January 2019. [EDITORIAL]

The Five Dynamics of Effective Teams. Google. Project Aristotle. [INSTITUTIONAL]

AI on the team

Dell’Acqua, F., Ayoubi, C., Lifshitz-Assaf, H., Sadun, R., Mollick, E., et al. The Cybernetic Teammate. A Field Experiment on Generative AI Reshaping Teamwork and Expertise. Harvard Business School Working Paper 25-043. March 2025. [INSTITUTIONAL]

When AI Joins the Team, Better Ideas Surface. HBS Working Knowledge. 2025. [EDITORIAL]

To Mitigate Gen AI’s Risks, Draw on Your Team’s Collective Judgment. Harvard Business Review. November 2024. [EDITORIAL]

AI sycophancy. The new failure mode.

Sharma, M., et al. Towards Understanding Sycophancy in Language Models. Anthropic. arXiv:2310.13548. October 2023. [INSTITUTIONAL]

Cheng, M., et al. Sycophantic AI Decreases Prosocial Intentions and Promotes Dependence. Science. Stanford and Carnegie Mellon. 2026. [PEER-REVIEWED]

AI Overly Affirms Users Asking for Personal Advice. Stanford Report. March 2026. [INSTITUTIONAL]

AI Chatbots are Sycophants. Researchers Say It Is Harming Science. Nature. 2025. [EDITORIAL]

AI Sycophancy. Why Chatbots Agree With You. IEEE Spectrum. 2025. [EDITORIAL]

The April 2025 GPT-4o sycophancy event

Sycophancy in GPT-4o. What Happened and What We Are Doing About It. OpenAI. April 2025. [INSTITUTIONAL]

Expanding on What We Missed With Sycophancy. OpenAI. 2025. [INSTITUTIONAL]

OpenAI Rolls Back Update That Made ChatGPT Too Sycophant-y. TechCrunch. April 29, 2025. [EDITORIAL]

Psychological safety meets AI

Edmondson, A. and Seth, J. How to Foster Psychological Safety When AI Erodes Trust on Your Team. Harvard Business Review. February 2026. [EDITORIAL]

When Integrating AI, Focus on Psychological Safety. Harvard Business Review Management Tip. February 2026. [EDITORIAL]

When AI Agrees Too Easily, It May Be Changing How We Think. MIT Sloan Management Review. 2025. [EDITORIAL]
